Alright, since I clearly had this confused, help me walk through it:

There are 324,707,000 residents in the United States, 93% of whom are citizens, meaning we are looking to disperse benefits to ~301,977,510 people.

Average family size in the US is 2.53, and the birthrate is 1.88. That’s the latest number, though, and won’t be reflected among older generations, so let’s tick it up a bit, and say that we have ~119,358,700 families with a statistical 1.53 adults and 2 children.
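For anyone who wants to check the head count, here is a rough sketch of that arithmetic. It assumes the resident figure is about 324.7 million and that the family count is simply citizens divided by average family size; the variable names are mine, not anything official.

```python
# Rough population arithmetic; all inputs are the figures quoted in this post.
residents = 324_707_000      # assumed: US residents, about 324.7 million
citizen_share = 0.93         # share of residents who are citizens
avg_family_size = 2.53       # average US family size

citizens = residents * citizen_share
families = citizens / avg_family_size

print(f"{citizens:,.0f} citizens, about {families:,.0f} families")
```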

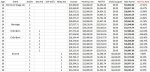

As I understand the program you are laying out, the bottom 39.9999% get the full benefit, it begins to phase out at 40%, and is completely gone at 70%.

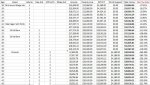

A statistically typical family getting the full benefit will get (2 × $4,180) + (1.53 × $12,060) = $26,811.80. (You said 6080 for the per-child amount, but I think you meant the poverty line addition, which is 4180, and mistyped.)

If we take away 3.3333% of that benefit for each percentage point above the 40% mark, then we will hit your goal of 0% by the 70th percentile.

This means that you are taking away $893.64 for every additional percentage point that someone moves up relative to their peers in American income.
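Here is a minimal sketch of that phase-out as I understand it, assuming the benefit falls in a straight line from the 40th to the 70th percentile. The function names and the linear shape are my own illustration, not a spec of the actual proposal. One small note: dividing exactly by 30 gives about $893.73 per point; the $893.64 above appears to come from rounding 1/30 to 3.333%.

```python
# Sketch of the benefit and its linear phase-out (assumed shape, hypothetical names).
CHILD_BENEFIT = 4_180    # per-child amount used in this post
ADULT_BENEFIT = 12_060   # per-adult amount used in this post

def full_benefit(adults=1.53, children=2):
    """Full benefit for the statistically typical family."""
    return children * CHILD_BENEFIT + adults * ADULT_BENEFIT

def benefit_at(percentile, full, start=40, end=70):
    """Benefit at a given income percentile, phased out linearly from start to end."""
    if percentile < start:
        return full
    if percentile >= end:
        return 0.0
    return full * (end - percentile) / (end - start)

full = full_benefit()
clawback_per_point = full / (70 - 40)

print(f"full benefit: ${full:,.2f}")
print(f"clawback per percentage point: ${clawback_per_point:,.2f}")
```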

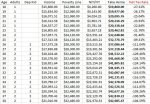

If we take a look at the income growth over quintiles, for example, we can see how far American incomes move per percentage point, roughly, if we assume an even distribution. From the 40th to 60th percentile, for example, it moves about $16,165.00, or about $808.25 per percentage point.

...meaning that “clawing back” $893.64 for every percentage point represents an effective marginal tax rate of 110.56%. Meaning that we’ve created a massive welfare cliff that runs across 30% of US income earners, effectively locking out all growth in income for the middle class, forever.
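The rate itself is just one division, sketched below with the figures from this post. It assumes incomes are spread evenly across the 40th to 60th percentile band, which is a simplification on my part.

```python
# Effective marginal rate implied by the clawback (figures quoted in this post).
income_gain_40_to_60 = 16_165.00   # income growth from the 40th to 60th percentile
points_in_band = 20                # percentage points covered by that band
clawback_per_point = 893.64        # benefit lost per percentage point

income_per_point = income_gain_40_to_60 / points_in_band
effective_rate = clawback_per_point / income_per_point

print(f"income gained per point: ${income_per_point:,.2f}")
print(f"effective marginal rate: {effective_rate:.2%}")
```

Anything over 100% means a family moving up a percentage point loses more in benefits than it gains in income.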

That’s, uh. That, I think, is not good?